Estimating the minimum number and positions of cameras for the 3D reconstruction of a microsurgery scene, based on a 3D reference object that reflects the anatomical structure of typical microsurgery scenes

- type: Bachelor thesis

- tutor:

- person in charge:

Motivation

Today, surgical microscopes are the gold standard in microsurgery applications such as ophthalmology, neurosurgery, otorhinolaryngology, gynecology, plastic surgery and, more recently, dentistry. Even though state-of-the-art optomechanical technology is robust and reliable, surgical visualization systems still have a number of limitations with respect to usability requirements and the need for integration into a future fully digital visualization workflow. For example, it is nearly impossible to realize more than two observer views in a currently available commercial surgical microscope. Furthermore, the observer positions are mostly not independent of each other, although each observer requires the same image quality as in the single-observer case. A state-of-the-art microscope only allows pre-recorded patient data to be overlaid on the current surgery scene; real-time data fusion is therefore not achieved. These are only a few of the limitations of presently available surgical microscopes.

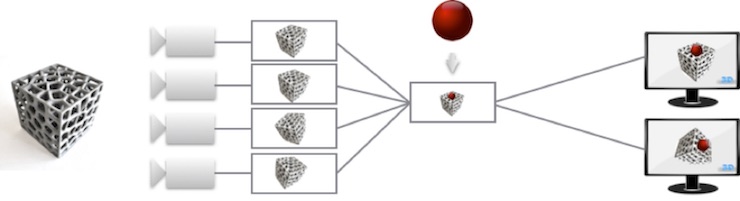

To reduce the above-mentioned limitations, an experimental fully digital surgical microscope will be developed at the Institute of Biomedical Engineering. Fully digital means that the displayed image is rendered from a 3D model, which is reconstructed from the surgery scene using a multi-camera setup. The complete setup is divided into several parts: the optical recording system, the illumination system, the 3D reconstruction algorithm, and the multi-observer visualization.

Project description

Conceive and design a 3D object that reflects the anatomical structure of typical microsurgery scenes. Develop a framework for estimating the number and positions of cameras as a function of working distance, percentage coverage of the 3D object, sensor size, and the space available for camera placement. The point mapping between the 3D object and the image sensor is to be based on the principles of a pinhole camera.
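As a rough illustration of the pinhole mapping mentioned above, the following minimal Python sketch projects points of a 3D object onto an image sensor and estimates which fraction of them is seen by at least one camera. It is only a sketch under stated assumptions, not the framework to be developed: all function names (`project`, `is_visible`, `coverage`), the millimetre units, and the example values for working distance, focal length and sensor size are hypothetical. Lens distortion and occlusion by the object itself are ignored.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def _unit(a):
    n = math.sqrt(_dot(a, a))
    return (a[0] / n, a[1] / n, a[2] / n)

def camera_axes(position, target, up=(0.0, 1.0, 0.0)):
    """Right, up and forward unit vectors of a camera looking at `target`.

    Assumption: the viewing direction is not parallel to `up`.
    """
    forward = _unit(_sub(target, position))
    right = _unit(_cross(forward, up))
    true_up = _cross(right, forward)
    return right, true_up, forward

def project(point, position, target, focal_length_mm):
    """Pinhole projection of a 3D point onto a virtual image plane.

    Returns sensor-plane coordinates (x, y) in mm relative to the sensor
    centre, or None if the point lies behind the camera.
    """
    right, up, forward = camera_axes(position, target)
    d = _sub(point, position)
    depth = _dot(d, forward)          # distance along the optical axis
    if depth <= 0.0:
        return None
    x = focal_length_mm * _dot(d, right) / depth
    y = focal_length_mm * _dot(d, up) / depth
    return (x, y)

def is_visible(point, position, target, focal_length_mm, sensor_w_mm, sensor_h_mm):
    """True if the projected point falls inside the sensor rectangle."""
    xy = project(point, position, target, focal_length_mm)
    if xy is None:
        return False
    return abs(xy[0]) <= sensor_w_mm / 2 and abs(xy[1]) <= sensor_h_mm / 2

def coverage(points, cameras, focal_length_mm, sensor_w_mm, sensor_h_mm):
    """Fraction of points seen by at least one camera (occlusion ignored).

    `cameras` is a list of (position, target) pairs.
    """
    seen = sum(
        1 for p in points
        if any(is_visible(p, pos, tgt, focal_length_mm, sensor_w_mm, sensor_h_mm)
               for pos, tgt in cameras)
    )
    return seen / len(points)

# Hypothetical example: one camera 200 mm above the scene, 50 mm focal
# length, 4 mm x 3 mm sensor, four sample points on the object plane.
sample_points = [(0, 0, 0), (5, 0, 0), (10, 0, 0), (0, 20, 0)]
cameras = [((0, 0, 200), (0, 0, 0))]
fraction = coverage(sample_points, cameras, 50.0, 4.0, 3.0)
```

In a sketch like this, the search for the minimum number of cameras could be approached by adding candidate positions from the permitted placement space until the coverage fraction reaches a chosen threshold. A realistic framework would additionally need a visibility test that accounts for surfaces hiding each other.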